-

Product

-

Inferencing Low-latency model inferencing at scale

-

Fine-tuning Customize models for your needs

-

GPU Clusters High-performance compute for AI tasks

-

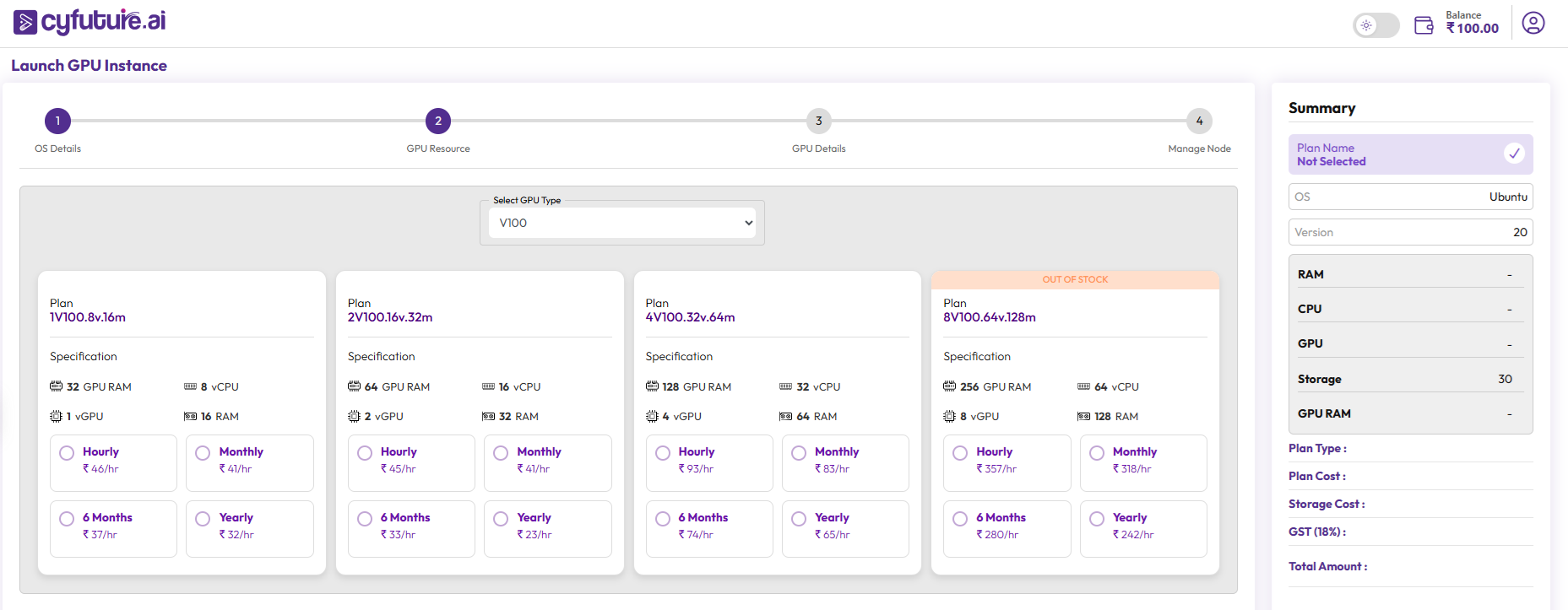

GPU as a Service Enterprise-Grade GPU Power

-

AI as a Service Your ideas, powered by instant AI.

-

AI Software Service Your ideas, powered by instant AI.

-

AI Chatbot Intelligent chat, real-time solutions

-

RAG Smart AI retrieval system

-

AI IDE Lab Build and test AI in one workspace

-

Serverless Inferencing Run AI inference instantly, without servers.

-

AI Agents Task-savvy, intelligent bots

-

Model Library Pre-trained AI models to use

-

Vector Database Smart data search for AI

-

Storage Secure, accessible object storage

-

Enterprise Cloud Robust infrastructure for heavy workloads

-

Lite Cloud Lightweight environment for small projects

-

AI Apps Hosting One-click AI app deployment

-

AI Apps Builder Build AI apps with low code

-

Pipelines Automate Everything, Effortlessly

-

Container as a Service Simplified container deployment at scale

-

Nodes Adaptive Compute Power

-

Datasets Datasets to accelerate your innovation

-

AI lab as a service Cloud-powered AI labs for modern innovation

-

Sales Agent AI-powered Sales Agent for high-impact sales communication

-


